RAG Performance Evaluation & Metrics (NDCG, MRR, MAP)

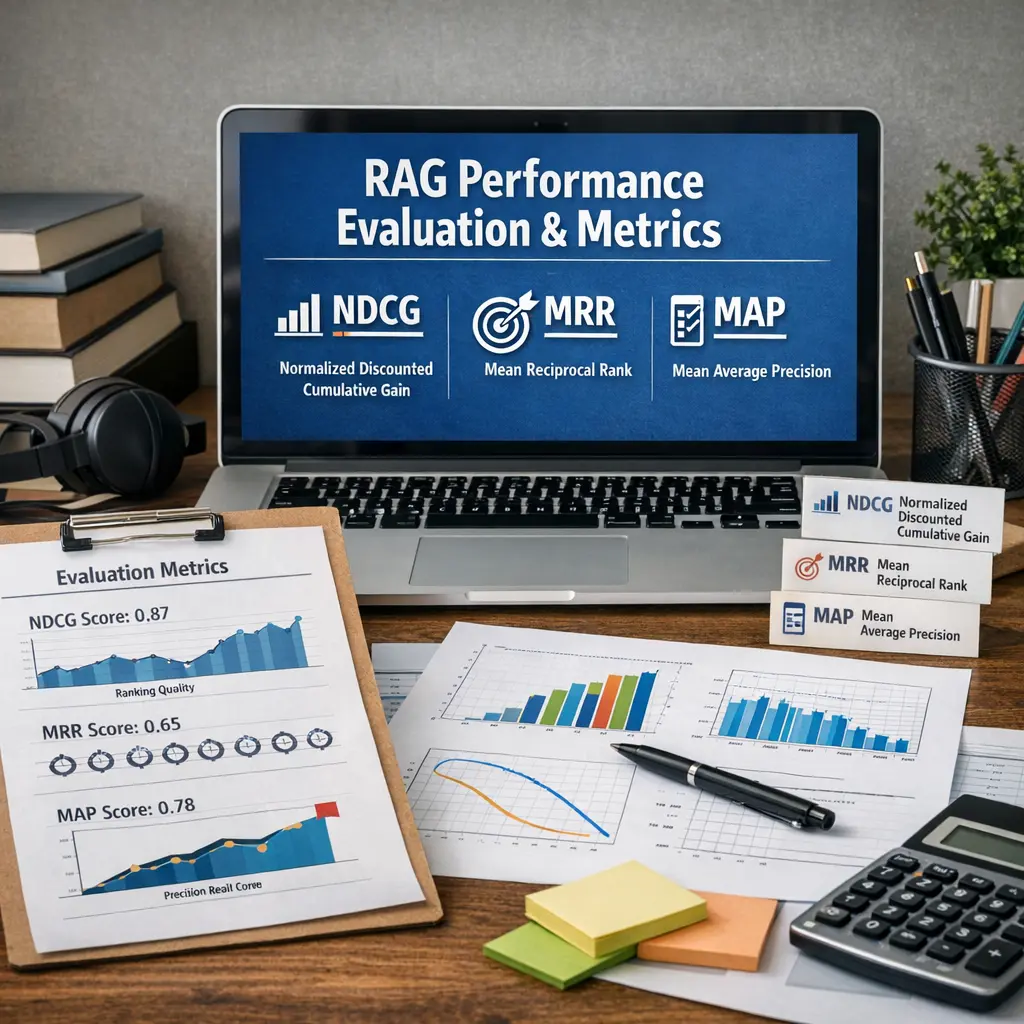

RAG performance evaluation assesses the effectiveness of Retrieval-Augmented Generation (RAG) systems using quantitative measures. Key retrieval metrics include NDCG (Normalized Discounted Cumulative Gain), which scores ranking quality using graded relevance and a position-based discount; MRR (Mean Reciprocal Rank), which measures how high the first relevant result appears; and MAP (Mean Average Precision), which averages the precision at each relevant result's rank within a query and then across queries. These metrics indicate how well the retriever finds and ranks relevant information, which the generator depends on to produce accurate responses.

💡 Key Takeaways

- Learn how RAG evaluation uses NDCG, MRR, and MAP to quantify retrieval quality, which in turn shapes answer quality.

- Understand NDCG: graded relevance and position-based discounting to reward better-ranked results.

- Understand MRR: the importance of the rank of the first relevant retrieved item.

- Understand MAP: computing average precision for each query, then taking the mean across queries to assess overall ranking performance.

- Consider practical aspects of RAG evaluation, including relevance judgments, dataset design, and metric selection.

❓ Frequently Asked Questions

What is Retrieval-Augmented Generation (RAG)?

RAG combines a document retriever with a generator to fetch relevant passages and use them to produce informed responses.

What does NDCG measure in RAG evaluation?

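NDCG (Normalized Discounted Cumulative Gain) scores how well retrieved passages are ordered by usefulness, giving higher weight to items near the top. It divides the discounted gain of the actual ranking by that of the ideal ranking, so with non-negative relevance grades it ranges from 0 to 1. Higher NDCG means better ranking quality.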
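
As a rough illustration, NDCG at a cutoff k can be sketched in a few lines of Python. This is a minimal sketch rather than a reference implementation: it assumes a linear gain (the relevance grade itself, not the `2^rel - 1` variant), a `log2(rank + 1)` discount, and that the caller supplies the grades of all judged passages for the query. The function names `dcg_at_k` and `ndcg_at_k` are made up for this example.

```python
import math


def dcg_at_k(relevances, k):
    """Discounted cumulative gain over the first k graded relevance scores.

    Assumes linear gain and a log2(rank + 1) discount, with ranks starting at 1.
    """
    return sum(
        rel / math.log2(rank + 1)
        for rank, rel in enumerate(relevances[:k], start=1)
    )


def ndcg_at_k(retrieved_relevances, judged_relevances, k):
    """NDCG@k: DCG of the actual ranking divided by DCG of the ideal ranking.

    retrieved_relevances: grades of the retrieved passages, in ranked order.
    judged_relevances: grades of all judged passages for the query (any order).
    """
    ideal = sorted(judged_relevances, reverse=True)
    ideal_dcg = dcg_at_k(ideal, k)
    if ideal_dcg == 0:
        return 0.0  # no relevant passages judged for this query
    return dcg_at_k(retrieved_relevances, k) / ideal_dcg


# Hypothetical grades: 3 = highly relevant, 0 = not relevant.
perfect = ndcg_at_k([3, 2, 1], [3, 2, 1], k=3)
reversed_order = ndcg_at_k([1, 2, 3], [3, 2, 1], k=3)
print(perfect, reversed_order)
```

The second call ranks the same passages in the worst order, so its score falls below the first even though the retrieved set is identical.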

What is MRR and what does it indicate for RAG?

MRR (Mean Reciprocal Rank) averages the reciprocal rank of the first relevant retrieved item across queries, indicating how quickly a useful passage appears. Higher MRR is better.
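
The calculation described above can be sketched as follows. This is an illustrative example under stated assumptions: relevance is binary, each query is represented as a pair of (ranked retrieved IDs, set of relevant IDs), and a query with no relevant item retrieved contributes 0. The names `reciprocal_rank` and `mean_reciprocal_rank` and the passage IDs are hypothetical.

```python
def reciprocal_rank(retrieved_ids, relevant_ids):
    """Return 1 / rank of the first relevant item, or 0.0 if none is retrieved."""
    for rank, doc_id in enumerate(retrieved_ids, start=1):
        if doc_id in relevant_ids:
            return 1.0 / rank
    return 0.0


def mean_reciprocal_rank(runs):
    """Average the reciprocal rank over queries.

    runs: list of (retrieved_ids, relevant_ids) pairs, one per query.
    """
    if not runs:
        return 0.0
    return sum(reciprocal_rank(r, rel) for r, rel in runs) / len(runs)


# Hypothetical runs: first relevant hit at rank 2, at rank 1, and not at all.
runs = [
    (["p1", "p2", "p3"], {"p2"}),
    (["p4", "p5"], {"p4"}),
    (["p6"], {"p9"}),
]
print(mean_reciprocal_rank(runs))  # (1/2 + 1 + 0) / 3 = 0.5
```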

What is MAP and how does it differ from NDCG and MRR?

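MAP (Mean Average Precision) computes, for each query, the average of the precision values at the rank of every relevant retrieved item, and then averages that figure over queries. Unlike MRR, which considers only the first relevant item, MAP rewards retrieving all relevant items early; unlike NDCG, it treats relevance as binary rather than graded.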
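
A minimal sketch of MAP in Python, assuming binary relevance, unique IDs in each ranked list, and normalization by the total number of relevant items for the query (so relevant items that were never retrieved lower the score). Some implementations normalize differently, so treat this as one common convention. The function names and passage IDs are hypothetical.

```python
def average_precision(retrieved_ids, relevant_ids):
    """Average of precision@rank taken at each rank where a relevant item appears.

    Divides by the total number of relevant items, so missed items count against
    the score. Assumes retrieved_ids contains no duplicates.
    """
    if not relevant_ids:
        return 0.0
    hits = 0
    precision_sum = 0.0
    for rank, doc_id in enumerate(retrieved_ids, start=1):
        if doc_id in relevant_ids:
            hits += 1
            precision_sum += hits / rank  # precision at this rank
    return precision_sum / len(relevant_ids)


def mean_average_precision(runs):
    """Mean of per-query average precision.

    runs: list of (retrieved_ids, relevant_ids) pairs, one per query.
    """
    if not runs:
        return 0.0
    return sum(average_precision(r, rel) for r, rel in runs) / len(runs)


# Hypothetical runs: relevant items at ranks 1 and 3, then a single one at rank 2.
runs = [
    (["p1", "p7", "p2"], {"p1", "p2"}),  # AP = (1/1 + 2/3) / 2
    (["p8", "p3"], {"p3"}),              # AP = (1/2) / 1
]
print(mean_average_precision(runs))
```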