Retrieval-Enhanced Instruction Tuning

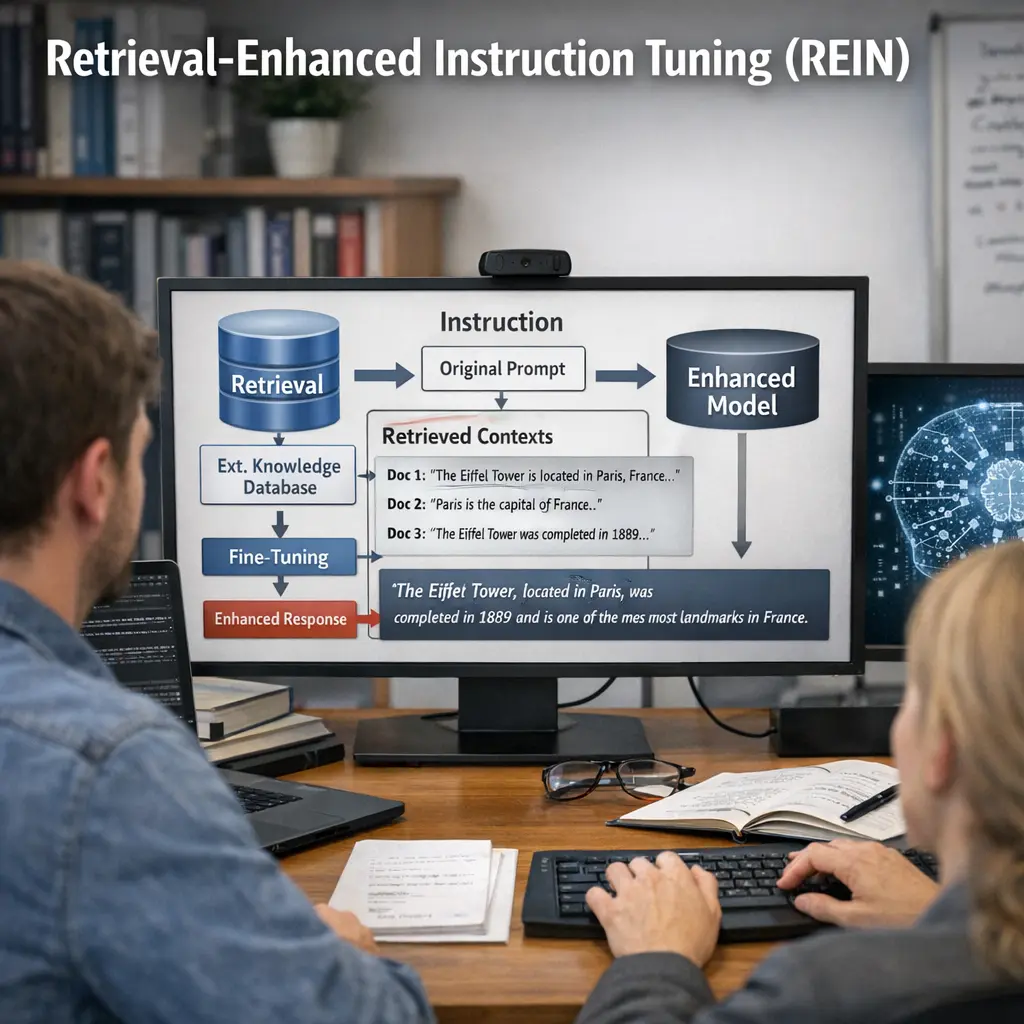

Retrieval-Enhanced Instruction Tuning (REIT) is a family of techniques within Retrieval-Augmented Generation (RAG) in which a language model is fine-tuned on instructions paired with relevant retrieved documents. Training this way teaches the model to follow complex instructions while grounding its responses in external information that can be kept up to date. Combining retrieval with instruction tuning tends to make models more accurate and context-aware, and better at producing fact-based answers across a wide range of tasks.

💡 Key Takeaways

- Understand how retrieval-augmented methods combine external knowledge with instruction-tuned models to improve responses.

- Identify the roles of the retriever, the knowledge source, and the instruction-tuned model in Retrieval-Enhanced Instruction Tuning.

- Learn how this approach can improve accuracy, reduce hallucinations, and keep information up-to-date by pulling relevant evidence.

- Understand the basic workflow: build a knowledge base, index it, retrieve documents at training or inference time, and fine-tune the model on instruction data.
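
The workflow in the last takeaway can be sketched in a few lines. This is a minimal illustration, not a production pipeline: the knowledge base is a hypothetical three-sentence list, the "index" is a plain term-count vector per document standing in for a real embedding index, and the function names (`build_index`, `retrieve`, `make_training_record`) are invented for this example. The actual fine-tuning step is left out; the sketch stops at producing a training record that pairs an instruction with retrieved context.

```python
import math
import re
from collections import Counter

# Hypothetical toy knowledge base; a real one would be far larger.
KNOWLEDGE_BASE = [
    "The retriever selects documents that are relevant to a query.",
    "Instruction tuning fine-tunes a language model on instruction and response pairs.",
    "A vector index stores document embeddings for fast similarity search.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def build_index(docs):
    """Index step: one term-count vector per document (a stand-in for embeddings)."""
    return [Counter(tokenize(doc)) for doc in docs]


def cosine(a, b):
    """Cosine similarity between two term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items() if term in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, docs, index, k=1):
    """Retrieval step: return the k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        range(len(docs)),
        key=lambda i: cosine(query_vec, index[i]),
        reverse=True,
    )
    return [docs[i] for i in ranked[:k]]


def make_training_record(instruction, response, docs, index, k=1):
    """Pair an instruction and target response with retrieved context."""
    return {
        "instruction": instruction,
        "context": retrieve(instruction, docs, index, k),
        "response": response,
    }


INDEX = build_index(KNOWLEDGE_BASE)
record = make_training_record(
    "What does a vector index store?",
    "It stores document embeddings.",
    KNOWLEDGE_BASE,
    INDEX,
)
```

Records of this shape would then feed whatever fine-tuning setup is in use; the same `retrieve` call can be reused at inference time so the model sees context in the same form it was trained on.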

❓ Frequently Asked Questions

What is Retrieval-Enhanced Instruction Tuning (REIT)?

A training approach that combines instruction tuning with retrieval of external documents, so the model can draw on relevant knowledge when generating responses.

How does REIT differ from standard instruction tuning?

Standard instruction tuning trains the model on a fixed set of instruction-response pairs, so its knowledge is limited to what it saw during training. REIT adds a retrieval step that fetches context from a knowledge source and conditions the answer on it.

What are the main components of REIT?

A base language model, a retriever (e.g., one backed by a vector index), a knowledge source, and a method to fuse retrieved text with the prompt.
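
The last component, fusing retrieved text with the prompt, is often just a prompt template. The sketch below shows one simple way to do it, under stated assumptions: the `Context / Instruction / Response` layout, the function name `fuse_prompt`, and the character budget `max_context_chars` are all illustrative choices, not a standard format. Passages are assumed to arrive ranked best-first from the retriever.

```python
def fuse_prompt(instruction, passages, max_context_chars=500):
    """Prepend numbered retrieved passages to the instruction, within a character budget."""
    kept = []
    used = 0
    for i, passage in enumerate(passages, start=1):
        entry = f"[{i}] {passage.strip()}"
        if used + len(entry) > max_context_chars:
            # Passages are assumed ranked best-first, so the tail is dropped.
            break
        kept.append(entry)
        used += len(entry)
    context = "\n".join(kept) if kept else "(no context retrieved)"
    return f"Context:\n{context}\n\nInstruction:\n{instruction}\n\nResponse:\n"


prompt = fuse_prompt(
    "What does a vector index store?",
    ["A vector index stores document embeddings."],
)
print(prompt)
```

Numbering the passages makes it possible to ask the model to cite which passage supports its answer, and the budget keeps long retrieval results from crowding out the instruction itself.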

When is REIT particularly useful?

When you need up-to-date, domain-specific, or highly factual answers, such as research assistants or knowledge-base–driven support.

What are common challenges with REIT?

Added latency from retrieval, uneven quality and relevance of retrieved content, outdated or conflicting sources, and extra training and evaluation complexity.