Similarity Metrics: Cosine, Dot, L2

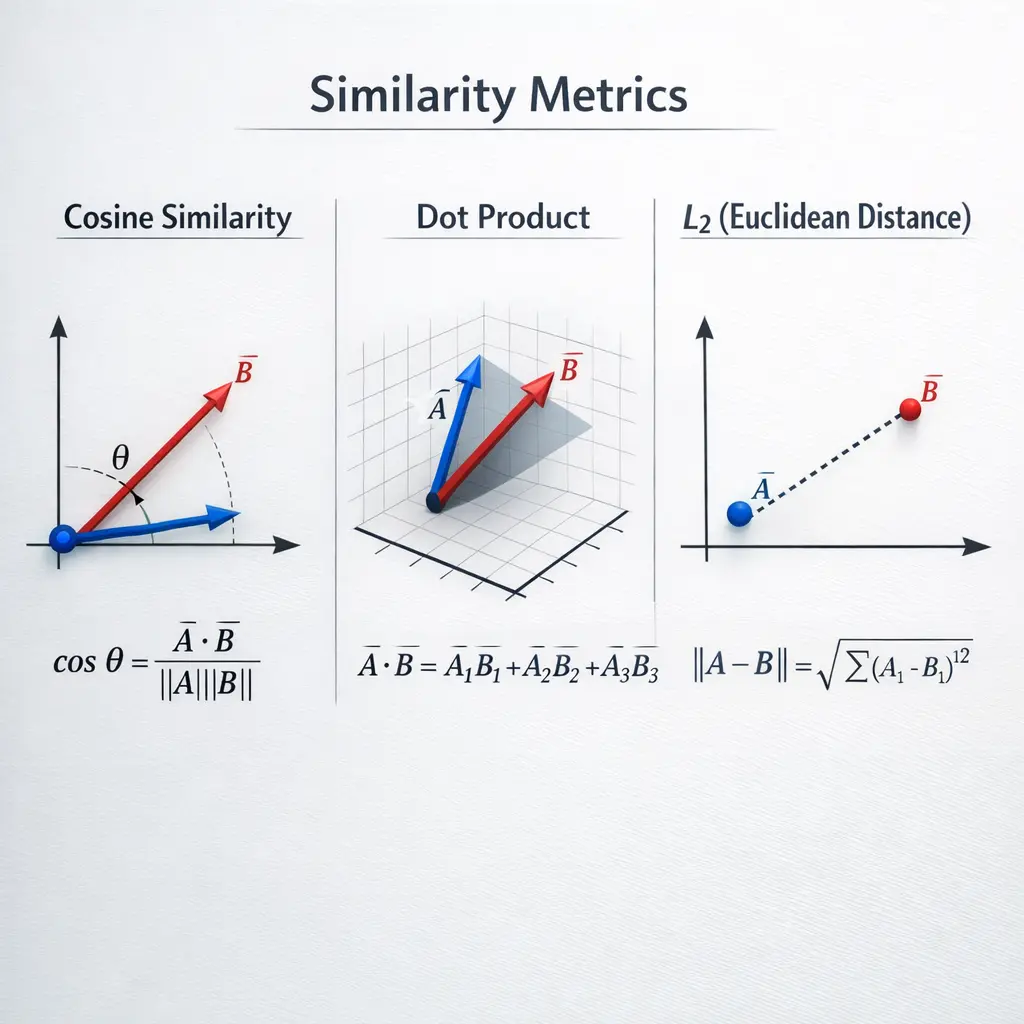

Similarity metrics such as cosine, dot product, and L2 are the mathematical tools that Retrieval-Augmented Generation (RAG) systems use to measure how alike two vectors (representing text or other data) are. Cosine similarity evaluates the angle between vectors, so it reflects direction and ignores magnitude. The dot product combines alignment and length: it grows both as the vectors point in more similar directions and as their magnitudes increase. L2 (Euclidean) distance is the straight-line distance between two vectors, so it reflects absolute differences in their values.

💡 Key Takeaways

- Understand cosine similarity and how the angle between vectors reflects similarity.

- Learn how to compute the dot product and how it relates to vector magnitude and alignment.

- Understand L2 (Euclidean) distance as a magnitude-based measure of difference between vectors.

- Know when to choose cosine, dot, or L2 in real tasks like text similarity, image features, or clustering.

❓ Frequently Asked Questions

What is cosine similarity?

Cosine similarity measures how closely two vectors point in the same direction, ignoring their magnitudes. It equals (a · b) / (||a|| × ||b||) and ranges from -1 (opposite directions) through 0 (orthogonal) to 1 (same direction).

How do you compute cosine similarity between two vectors?

Compute cos_sim = (a · b) / (||a|| × ||b||). If either vector is zero, the value is undefined; in practice, handle this case (e.g., set to 0 or skip).
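The formula above can be sketched in plain Python as follows. This is a minimal illustration, not a reference implementation: the function name `cosine_similarity` and the `zero_value` fallback parameter are assumptions made for this example, and returning 0 for a zero vector is just one of the options mentioned above.

```python
import math

def cosine_similarity(a, b, zero_value=0.0):
    """Cosine similarity of two equal-length sequences of numbers.

    zero_value is a hypothetical fallback returned when either vector
    has zero norm, where the formula itself is undefined.
    """
    if len(a) != len(b):
        raise ValueError("vectors must have the same length")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return zero_value
    return dot / (norm_a * norm_b)

print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))  # orthogonal vectors
print(cosine_similarity([1.0, 2.0], [2.0, 4.0]))  # same direction, different length
print(cosine_similarity([0.0, 0.0], [1.0, 2.0]))  # zero vector, falls back to zero_value
```

The second call is the interesting one: doubling a vector's length leaves the result at (approximately, given floating-point rounding) 1, which is what "ignoring magnitude" means in practice.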

What is the dot product and how does it relate to similarity?

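The dot product a · b = sum of a_i × b_i measures alignment scaled by length: it equals ||a|| × ||b|| × cos(θ), where θ is the angle between the vectors. Larger values indicate more alignment only when the magnitudes are comparable, and the dot product is the numerator of cosine similarity.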
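To make the length sensitivity concrete, here is a small sketch with made-up vectors. The helper `dot` and the names `query`, `aligned_short`, and `misaligned_long` are illustrative assumptions, not part of any library.

```python
def dot(a, b):
    """Dot product of two equal-length sequences of numbers."""
    return sum(x * y for x, y in zip(a, b))

query = [1.0, 1.0]
aligned_short = [1.0, 1.0]     # points exactly the same way as the query
misaligned_long = [10.0, 0.0]  # 45 degrees off the query, but much longer

print(dot(query, aligned_short))    # 2.0
print(dot(query, misaligned_long))  # 10.0
```

The longer vector scores higher even though it is less aligned with the query, which is why raw dot-product scores are usually compared only across vectors of similar or normalized length.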

What is L2 distance and how is it used for similarity?

L2 distance (Euclidean distance) is sqrt(sum (a_i − b_i)²). Smaller distances mean greater similarity. Unlike cosine, it depends on vector magnitudes; you can convert it to a similarity score with transformations like 1/(1+distance).
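A minimal sketch of the distance and the 1/(1+distance) conversion mentioned above, using only the standard library. The function names are assumptions chosen for this example, and the conversion is only one of several possible transformations.

```python
import math

def l2_distance(a, b):
    """Euclidean (L2) distance between two equal-length sequences."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def distance_to_similarity(distance):
    """Map a distance in [0, inf) to a similarity in (0, 1]."""
    return 1.0 / (1.0 + distance)

d = l2_distance([0.0, 0.0], [3.0, 4.0])
print(d)                            # 5.0 (a 3-4-5 right triangle)
print(distance_to_similarity(d))    # 1 / (1 + 5)
print(distance_to_similarity(0.0))  # identical vectors map to 1.0
```

Note that this conversion preserves ranking (smaller distance always means larger similarity), but the resulting scores are not comparable to cosine values.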

When should you choose cosine vs L2 vs dot product?

Choose cosine when only the direction of vectors matters (e.g., text embeddings). Use L2 distance when magnitude differences are meaningful. Dot product is fast and useful in linear models, but is sensitive to vector length unless vectors are normalized.
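One practical consequence of the normalization point: once all vectors are scaled to unit length, the dot product equals cosine similarity, and squared L2 distance equals 2 − 2 × cosine, so all three metrics rank candidates identically. The sketch below checks this on made-up data; the vectors, the `doc_*` names, and the helper functions are assumptions for illustration only.

```python
import math

def normalize(v):
    """Scale a non-zero vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def l2_distance(a, b):
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

query = normalize([1.0, 2.0, 3.0])
docs = {
    "doc_a": [2.0, 4.0, 6.5],
    "doc_b": [3.0, 0.0, 1.0],
    "doc_c": [-1.0, 0.5, 2.0],
}
unit_docs = {name: normalize(vec) for name, vec in docs.items()}

# Rank by dot product (higher is better) and by L2 distance (lower is better).
by_dot = sorted(unit_docs, key=lambda n: dot(query, unit_docs[n]), reverse=True)
by_l2 = sorted(unit_docs, key=lambda n: l2_distance(query, unit_docs[n]))

print(by_dot)
print(by_l2)
print(by_dot == by_l2)  # True for unit-length vectors
```

If your embeddings are already normalized, the choice between the three is therefore mostly about speed and what your vector store supports; the choice matters for ranking only when magnitudes differ.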