Text Chunking and Overlap Heuristics

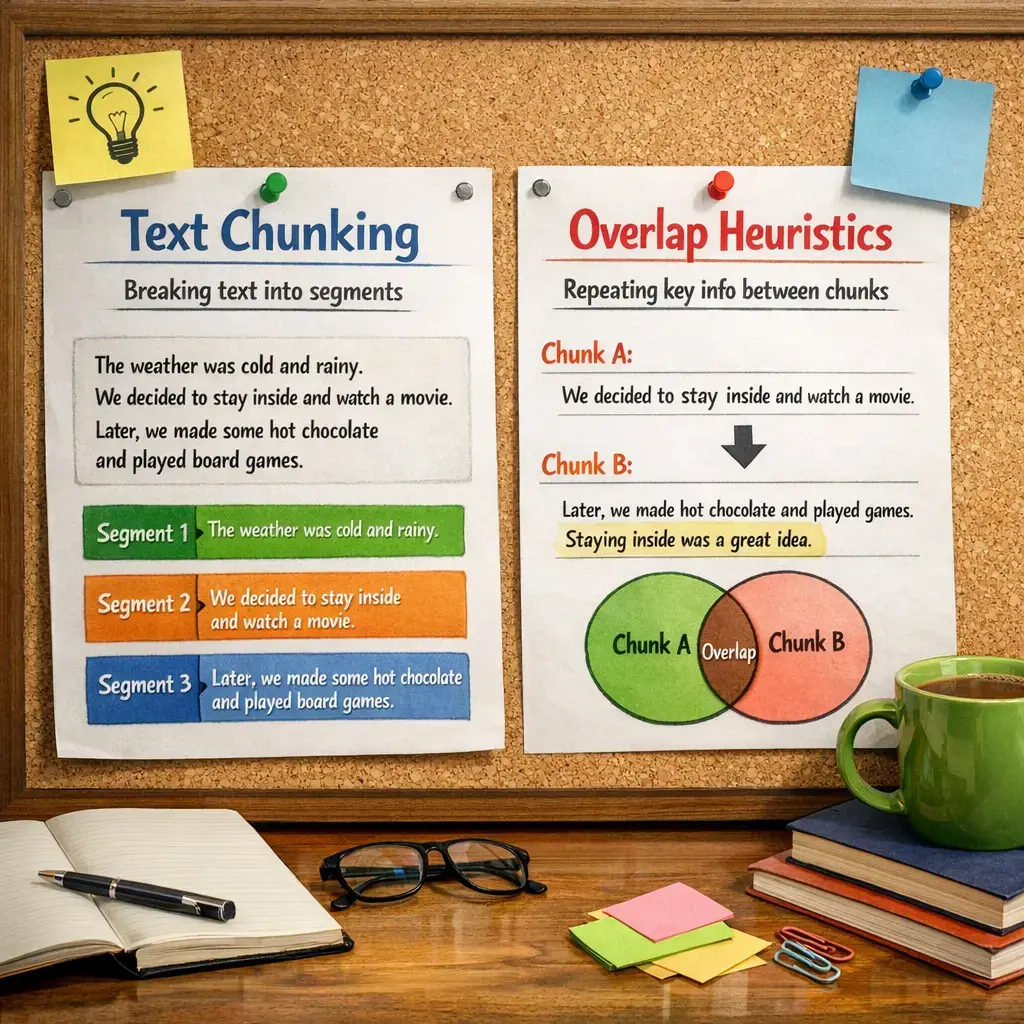

Text chunking and overlap heuristics are Retrieval-Augmented Generation (RAG) techniques for improving information retrieval from large documents. Text chunking divides a document into segments small enough for retrieval models to process, so that relevant passages can be retrieved individually. Overlap heuristics repeat part of each chunk's content in its neighboring chunk, which helps preserve context that would otherwise be cut at a chunk boundary. Together, these methods can improve retrieval accuracy and the quality of generated responses in RAG systems.

💡 Key Takeaways

- Understand what text chunking is and why it's used in NLP

- Learn how overlap between chunks helps preserve context across boundaries

- Explore common heuristics for choosing chunk size and overlap

- Assess the trade-offs of overlapping versus non-overlapping chunks for tasks like classification, translation, and summarization

❓ Frequently Asked Questions

What is text chunking in NLP?

Text chunking splits long text into smaller, meaningful pieces to fit processing limits and simplify analysis.
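One way to keep pieces "meaningful" is to split on sentence boundaries and pack whole sentences into each chunk. The sketch below is illustrative only: the function name `split_into_chunks`, the word-count limit, and the naive regex sentence splitter are assumptions, not a prescribed method. A production system would more likely count tokens with the model's own tokenizer.

```python
import re


def split_into_chunks(text: str, max_words: int) -> list[str]:
    """Greedily pack whole sentences into chunks of at most max_words words.

    Hypothetical helper for illustration. A single sentence longer than
    max_words is kept intact and becomes its own (oversized) chunk.
    """
    # Naive sentence splitter: break after '.', '!' or '?' followed by whitespace.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in sentences:
        n = len(sentence.split())
        # Close the current chunk if adding this sentence would exceed the limit.
        if current and count + n > max_words:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


text = "One two three. Four five. Six seven eight nine. Ten."
print(split_into_chunks(text, max_words=5))
# ['One two three. Four five.', 'Six seven eight nine. Ten.']
```

Because chunks end only at sentence boundaries, no sentence is cut in half, at the cost of chunks that vary in length.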

What are overlap heuristics in text chunking?

Overlap heuristics decide how much context to carry between adjacent chunks to avoid losing information at boundaries.

How should I choose chunk size and overlap length?

Keep the chunk size within your model's maximum input length, and pick an overlap that balances context against redundancy (e.g., 20–50% of the chunk size).
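A fixed-size sliding window is one simple way to apply a chunk size and an overlap together. The following is a minimal sketch, assuming the text has already been split into tokens (plain whitespace-separated words here); the name `chunk_with_overlap` and its parameters are hypothetical.

```python
def chunk_with_overlap(tokens: list[str], chunk_size: int, overlap: int) -> list[list[str]]:
    """Split tokens into windows of chunk_size that share `overlap` tokens.

    Hypothetical helper for illustration. The final window may be shorter
    than chunk_size.
    """
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")

    # Each new window starts `stride` tokens after the previous one.
    stride = chunk_size - overlap
    chunks: list[list[str]] = []
    for start in range(0, len(tokens), stride):
        chunks.append(tokens[start:start + chunk_size])
        # Stop once a window has reached the end of the input.
        if start + chunk_size >= len(tokens):
            break
    return chunks


words = "the quick brown fox jumps over the lazy dog".split()
for chunk in chunk_with_overlap(words, chunk_size=4, overlap=2):
    print(" ".join(chunk))
# the quick brown fox
# brown fox jumps over
# jumps over the lazy
# the lazy dog
```

Here the overlap is 50% of the chunk size, so every word except those at the very start and end appears in two chunks.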

What are common pitfalls of chunking with overlap?

Too little overlap can break phrases or dependencies; too much overlap adds redundancy and slows processing.
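The redundancy cost can be estimated before chunking anything. The sketch below assumes a fixed-size sliding window whose final chunk may be shorter than the rest; `overlap_cost` is a hypothetical helper that returns the number of chunks and the total number of tokens the model ends up processing.

```python
def overlap_cost(n_tokens: int, chunk_size: int, overlap: int) -> tuple[int, int]:
    """Return (number of chunks, total tokens processed) for a sliding window.

    Hypothetical helper for illustration. Assumes each window starts
    chunk_size - overlap tokens after the previous one and the last
    window is truncated at the end of the input.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    if n_tokens <= 0:
        return 0, 0

    stride = chunk_size - overlap
    # Ceiling division of (n_tokens - overlap) by stride, with at least one chunk.
    n_chunks = max(1, (n_tokens - overlap + stride - 1) // stride)
    # Every chunk after the first re-reads `overlap` tokens.
    total_tokens = n_tokens + (n_chunks - 1) * overlap
    return n_chunks, total_tokens


for overlap in (0, 40, 100):  # 0%, 20% and 50% of a 200-token chunk
    print(overlap, overlap_cost(1000, chunk_size=200, overlap=overlap))
# 0 (5, 1000)
# 40 (6, 1200)
# 100 (9, 1800)
```

Under these assumptions, a 50% overlap on a 1000-token document nearly doubles both the number of chunks and the tokens processed, while a 20% overlap adds about a fifth.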