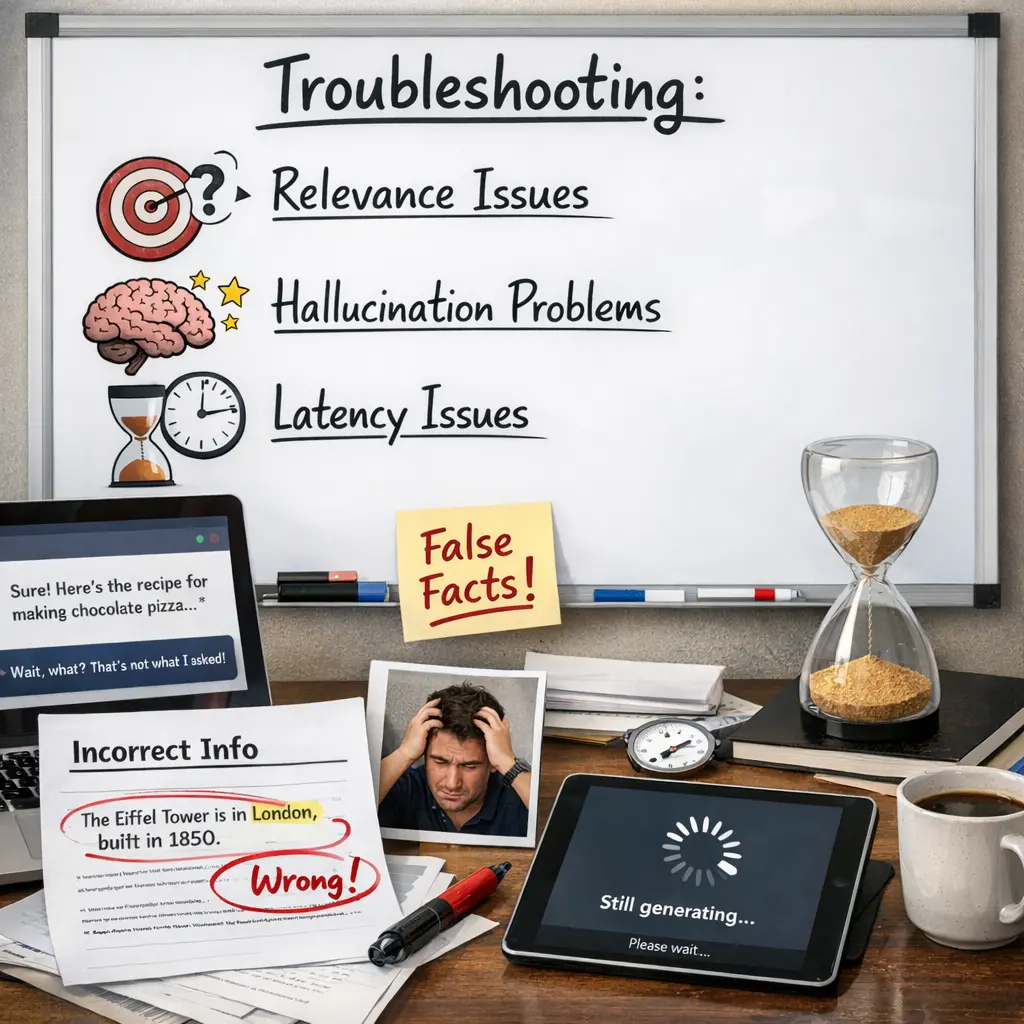

Troubleshooting: Relevance, Hallucination & Latency Issues

💡 Key Takeaways

- Understand how Retrieval-Augmented Generation (RAG) combines a retriever and a generator to ground answers in external documents.

- Learn methods to evaluate and boost the relevance of retrieved information to ensure responses stay on topic.

- Identify common causes of hallucinations in RAG (e.g., fabrications, misalignment) and apply mitigation techniques like reranking and citation verification.

- Explore latency drivers in RAG pipelines (retrieval time, model inference) and practical optimizations such as indexing, caching, batching, and using smaller models.

❓ Frequently Asked Questions

What is Retrieval-Augmented Generation (RAG)?

RAG combines a language model with a retrieval system that fetches relevant documents and conditions the answer on those sources.
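The retrieve-then-condition flow can be sketched in a few lines. This is only an illustration under stated assumptions: the token-overlap `score`, the `retrieve` helper, and the prompt wording in `build_prompt` are all hypothetical stand-ins. A real system would typically use an embedding-based retriever and send the prompt to a language model.

```python
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase, split on whitespace, and strip basic punctuation."""
    tokens = (t.strip(".,?!").lower() for t in text.split())
    return [t for t in tokens if t]


def score(query: str, doc: str) -> int:
    """Toy relevance score: number of tokens shared by query and doc."""
    q, d = Counter(tokenize(query)), Counter(tokenize(doc))
    return sum((q & d).values())


def retrieve(query: str, docs: list[str], k: int = 2) -> list[str]:
    """Return the k documents with the highest overlap score."""
    ranked = sorted(docs, key=lambda doc: score(query, doc), reverse=True)
    return ranked[:k]


def build_prompt(query: str, passages: list[str]) -> str:
    """Condition the generator on retrieved passages (hypothetical wording)."""
    sources = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer using only the sources below and cite them by number.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {query}"
    )
```

The string returned by `build_prompt` is what would be passed to the generator; numbering the sources makes it possible to ask for citations.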

How does RAG improve relevance and reduce hallucinations?

Grounding answers in retrieved documents helps the model stay aligned with real content; the quality of the retrieval determines how well this grounding works.
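One lightweight grounding check is to confirm that an answer actually cites the sources it was given. The sketch below assumes answers use bracketed numeric citations such as `[1]`, which is a convention chosen here for illustration; the function names are hypothetical. It only checks that citations exist and point at a real source, not that the source supports the claim.

```python
import re


def cited_indices(answer: str) -> set[int]:
    """Extract bracketed numeric citations like [2] from the answer text."""
    return {int(n) for n in re.findall(r"\[(\d+)\]", answer)}


def check_citations(answer: str, num_sources: int) -> dict:
    """Split citations into valid ones and ones pointing at no source."""
    cited = cited_indices(answer)
    valid = {i for i in cited if 1 <= i <= num_sources}
    return {
        "cited": sorted(valid),
        "invalid": sorted(cited - valid),  # possible fabricated references
        "has_citation": bool(valid),
    }
```

An answer with no valid citation, or with citations to nonexistent sources, is a reasonable candidate for regeneration or human review.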

What causes latency in a RAG setup and how can it be reduced?

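Latency comes mainly from two stages: retrieving documents and generating text. Reduce it by caching repeated queries, using an efficient vector index, retrieving fewer documents (which also shortens the prompt), and batching requests where possible. Streaming generation does not shorten total generation time, but it lowers the perceived wait by showing tokens as they arrive.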
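Caching is often the simplest of these to try. The sketch below uses Python's standard `functools.lru_cache` to skip repeated retrievals; `slow_search` is a hypothetical stand-in for a real vector-store query, and the `CALLS` counter exists only to show when the backend is hit.

```python
from functools import lru_cache

CALLS = {"count": 0}  # counts backend hits, for illustration only


def slow_search(query: str) -> tuple[str, ...]:
    """Hypothetical stand-in for an expensive vector-store lookup."""
    CALLS["count"] += 1
    return (f"doc about {query}",)


@lru_cache(maxsize=1024)
def cached_search(normalized_query: str) -> tuple[str, ...]:
    """Memoize results; tuples are returned so cached values stay immutable."""
    return slow_search(normalized_query)


def retrieve(query: str) -> tuple[str, ...]:
    """Normalize case and whitespace so trivially different queries share a cache entry."""
    return cached_search(" ".join(query.lower().split()))
```

Normalizing before the lookup raises the hit rate, but a cache like this serves stale results if the knowledge base changes, so it needs to be cleared (for example with `cached_search.cache_clear()`) after an index update.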

How can I measure and improve answer relevance in RAG?

Measure retrieval quality with metrics such as recall@k and precision@k, and supplement them with human review. Improve relevance with better retrievers, higher-quality sources, and tuned generation settings.
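For reference, precision@k and recall@k can be computed as follows. This is a minimal sketch that assumes binary relevance labels and divides precision by `k` even when fewer than `k` documents are returned, which is one common convention; the document IDs in the usage note are made up.

```python
def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved documents that are relevant."""
    if k <= 0:
        raise ValueError("k must be positive")
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant documents that appear in the top k."""
    if k <= 0:
        raise ValueError("k must be positive")
    if not relevant:
        return 0.0
    hits = sum(1 for doc_id in retrieved[:k] if doc_id in relevant)
    return hits / len(relevant)
```

With `retrieved = ["d1", "d4", "d2", "d7"]` and `relevant = {"d1", "d2", "d3"}`, two of the top three results are relevant, so precision@3 and recall@3 are both 2/3.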

What are best practices for using RAG in quizzes?

Use trusted sources, keep knowledge bases updated, provide citations, monitor accuracy, and make clear to users that answers are based on the retrieved content.